An enhanced predictive analytics modeling technique is provided in the Integrated Enterprise Excellence (IEE) business management system. In IEE, predictive analytics modeling scorecards are structurally linked with their processes through an IEE value chain.

An IEE value chain with its predictive analytics modeling scorecards links processes to the output performance measurements for those processes. Organizations gain much through an Integrated Enterprise Excellence (IEE) value chain (reference 1). With this system, when a performance metric is not achieving its desired objective, the processes associated with that metric in the value chain need improvement.

When organizations use the IEE predictive analytics modeling technique, they address the commonplace business scorecard and improvement issues that are described in a one-minute video.

Predictive Analytics Modeling Technique: IEE Value Chain and 9-step System

Content of this webpage is from Chapter 3 of the book Integrated Enterprise Excellence Volume III – Improvement Project Execution: A Management and Black Belt Guide for Going Beyond Lean Six Sigma and the Balanced Scorecard, Forrest W. Breyfogle III.

The creation of long-lasting business metrics is described first, followed by the 30,000-foot-level scorecard tracking of these measurements. It is then shown how a prediction statement can be made when a process is concluded to have a recent region of stability.

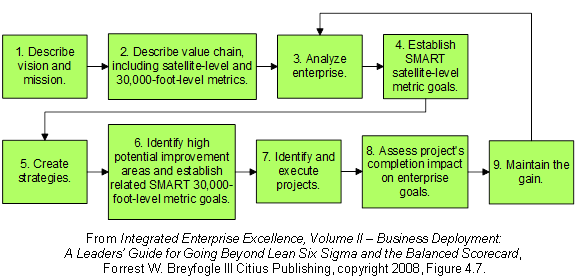

The Integrated Enterprise Excellence (IEE) system consists of nine steps, as shown in Figure 1. Step two states: “Describe value chain, including satellite-level (financial) and 30,000-foot-level (operational) metrics.” The value chain portion of this step is elaborated below.

Figure 1: Integrated Enterprise Excellence (IEE) business management system (reference 1)

An organization’s IEE value chain, as illustrated in Figure 2 below, provides a visual representation of what the enterprise does (rectangles in the figure) and its performance measures of success (ovals in the figure) from a customer and business point of view; i.e., cost, quality, and time. In this value chain, the rectangular boxes provide clickable access to process steps, functional value streams, and procedural documents. The center series of rectangular boxes describes the primary business flow, and the rectangular boxes outside this series describe support functions; e.g., legal and finance.

With this approach to describing the enterprise, the organization chart is subordinate to the value chain. The value chain is long-lasting even through organizational changes, although functional procedures and their metrics can change over time.

Metrics within a value chain should align with how the business is conducted. This is in contrast to creating metrics around the organizational chart or strategic plan objectives, both of which can change significantly over time. In addition, it is important not only to determine what should be measured but also to have a reporting methodology that leads to healthy behavior so that the organization as a whole benefits.

Figure 2: IEE Value Chain with Scorecard Metrics

Shaded areas designate processes that have sub-process drill-downs (reference 1)
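As a minimal sketch of the structure described above, a value chain can be modeled as a list of functions (the rectangles), each carrying its own performance measures (the ovals). The class names, function names, and metrics below are hypothetical illustrations and are not taken from Figure 2. The lookup shows the linkage idea: given a metric that is not meeting its objective, find the function whose process needs improvement.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Metric:
    name: str
    category: str  # "cost", "quality", or "time"


@dataclass
class ValueChainFunction:
    name: str
    primary_flow: bool  # True for the center series, False for support functions
    metrics: List[Metric] = field(default_factory=list)


# Hypothetical value chain; all names are placeholders, not the book's example.
value_chain = [
    ValueChainFunction("Develop product", True,
                       [Metric("Development cycle time", "time")]),
    ValueChainFunction("Produce and deliver", True,
                       [Metric("Defective rate", "quality"),
                        Metric("On-time delivery", "time")]),
    ValueChainFunction("Finance", False,
                       [Metric("Cost per invoice", "cost")]),
]


def processes_to_improve(chain, metric_name):
    """Return the names of functions whose metrics include the named metric."""
    return [f.name for f in chain
            if any(m.name == metric_name for m in f.metrics)]


print(processes_to_improve(value_chain, "Defective rate"))
# prints ['Produce and deliver']
```

Because the metrics hang off functions rather than off people or departments, a reorganization would change who owns a function without changing this structure.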

Predictive Analytics Modeling Technique: Creating Good Metrics

Good metrics provide decision-making insight that leads to the most appropriate conclusion and action or non-action. Measurements should have the following good-metric characteristics: business alignment, honest assessment, consistency, repeatability and reproducibility, actionability, time-series tracking, predictability, and peer comparability.

Organizations often report performance using a table of numbers, a stacked bar chart, or red-yellow-green report-outs, where red indicates that a goal/specification is not being met and green indicates that current performance is satisfactory. These reporting formats lack many of the good-metric characteristics listed above. In particular, none of these formats provides a predictive component, and all can lead to expensive, non-productive firefighting behaviors. These reporting-format limitations can be overcome with a 30,000-foot-level reporting system, as described in the article 30,000-foot-level Reports with Predictive Measurements.

In 30,000-foot-level reporting, there are no calendar boundaries, and a prediction statement can be made when appropriate. For example, one might report that a current metric performance level is predictable since the process has been stable for the last 17 weeks, and there is an estimated non-conformance rate of 2.2%. For predictable processes, we expect that this same level of non-conformance will occur in the future unless something is done to improve either the process inputs or the step-by-step execution of the process itself.
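The wording of such a report can be sketched as a small helper. This is only an illustration of the reporting idea: the function name, its parameters, and the exact sentence wording are assumptions, and the article does not prescribe a fixed text for the non-predictable case.

```python
def prediction_statement(predictable, stable_periods, period_unit,
                         nonconformance_pct):
    """Build the text reported alongside a 30,000-foot-level chart."""
    if not predictable:
        # A prediction is made only when the process is considered predictable.
        return "The process is not predictable; no prediction statement is made."
    return (f"The process is predictable: it has been stable for the last "
            f"{stable_periods} {period_unit}, with an estimated "
            f"non-conformance rate of {nonconformance_pct:.1f}%.")


# The example from the text: stable for 17 weeks, 2.2% non-conformance.
print(prediction_statement(True, 17, "weeks", 2.2))
```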

With this form of reporting, common-cause variability is separated from special-cause events at a high level. Also, from this 30,000-foot-level business perspective, typical variability from process input differences is considered common-cause input variability that should not be reacted to as though it were special-cause variability; e.g., variation from raw material lot-to-lot, day-of-the-week, people-to-people, and machine-to-machine differences.

Often, the management practice of determining what happened today and sending someone to “fix the problem” leads to much firefighting. More often than not, these firefighting activities yield minimal improvement because common-cause variability issues are treated as though they were special-cause. Red-yellow-green scorecards, which track to goals throughout an organization, can sound attractive but can lead to much firefighting.

To illustrate this point, consider the red-yellow-green continuous-data scorecard shown at the top of Figure 3, which is from a corporation’s actual scorecard system, and its comparison to a 30,000-foot-level scorecard reporting system. The 30,000-foot-level metric reporting system has two steps. The first step is to analyze for predictability. The second step is to formulate a prediction statement when the process is considered predictable.

Figure 3: Comparison of a red-yellow-green scorecard to 30,000-foot-level predictive measurement reporting; histogram included for illustrative purposes only (reference 1)

To determine predictability, the process is assessed for statistical stability using a 30,000-foot-level individuals control chart, which can detect if the process response has changed over time and/or if it is stable.
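The article does not spell out the chart calculation. The following is a minimal sketch that assumes the conventional individuals (XmR) chart formula, in which the control limits sit 2.66 average moving ranges on either side of the mean. The function names and the data values are hypothetical; the data are not the values plotted in Figure 3.

```python
from statistics import mean


def individuals_chart_limits(values):
    """Return (lower limit, center line, upper limit) for an individuals chart."""
    if len(values) < 2:
        raise ValueError("at least two observations are needed")
    # Moving range: absolute difference between consecutive observations.
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    center = mean(values)
    # 2.66 is 3 / d2, where d2 = 1.128 for moving ranges of size two.
    half_width = 2.66 * mean(moving_ranges)
    return center - half_width, center, center + half_width


def out_of_limit_points(values):
    """Return indexes of observations outside the control limits."""
    lower, _, upper = individuals_chart_limits(values)
    return [i for i, v in enumerate(values) if v < lower or v > upper]


# Hypothetical responses for 13 reporting periods.
responses = [2.0, 2.4, 2.1, 2.6, 2.3, 1.9, 2.5, 2.2, 2.7, 2.0, 2.4, 2.3, 2.1]
print(individuals_chart_limits(responses))
print(out_of_limit_points(responses))  # an empty list suggests stability
```

An empty list of out-of-limit points is one indication that the process is stable. A complete assessment would typically also look for shifts or trends within the limits, which this sketch omits.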

When there is a current region of stability, data from this last region can be considered a random sample of the future. For this example, note how the 30,000-foot-level control chart in Figure 3 indicates that nothing fundamental in the process has changed, even though a traditional red-yellow-green scorecard showed the metric frequently transitioned among red, yellow, and green. For the traditional scorecard, the performance level was red 5 out of the 13 recorded times.

Included in this figure is a probability plot that can be used to make a prediction statement. Much can be learned about a process through a probability plot. Let’s next examine some of the benefits of probability plotting.

The x-axis in this probability plot is the magnitude of a process response over the region of stability, while the y-axis is percent less than. A very important advantage of probability plotting is that data do not need to be normally distributed for a prediction statement to be made. The y-axis scale is dependent upon the distribution type; e.g., normal or log-normal distribution.
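To make the axes concrete, the sketch below computes the coordinates of a normal probability plot by hand. It assumes the Hazen plotting position, (i - 0.5) / n, which is one common convention among several; statistical software may use a slightly different formula. The function name and data are hypothetical.

```python
from statistics import NormalDist


def normal_probability_plot_points(values):
    """Return (response, percent less than, normal score) for each observation."""
    n = len(values)
    standard_normal = NormalDist()
    points = []
    for rank, x in enumerate(sorted(values), start=1):
        p = (rank - 0.5) / n              # assumed Hazen plotting position
        z = standard_normal.inv_cdf(p)    # position on the normal-scaled y-axis
        points.append((x, 100 * p, z))
    return points


# Hypothetical data from a recent region of stability.
for x, pct, z in normal_probability_plot_points([2.6, 1.9, 2.3, 2.1, 2.4]):
    print(f"response={x:.1f}  percent less than={pct:.0f}%  normal score={z:+.2f}")
```

Plotting the response against the normal score gives a straight line when the data are approximately normal. For a log-normal plot, the same calculation could be applied to the logarithms of the responses.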

If the data on a probability plot closely follow a straight line, we act as though the data are from the distribution that is represented by the probability plot coordinate system. Estimated population percentages below a specification limit can be made by simply examining the y-axis percentage value, as shown in Figure 3. For this case, we estimate that about 33% of the time, now and in the future, we will be below our 2.2 specified criterion or goal.
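Reading a percentage off the fitted line is equivalent to evaluating the fitted distribution's cumulative probability at the specification limit. A minimal sketch, assuming a normal distribution is an adequate fit and using hypothetical data (not the values behind Figure 3, so it will not reproduce the 33% estimate):

```python
from statistics import NormalDist


def percent_below(values, limit):
    """Estimate the percent of future responses below `limit` from a normal fit."""
    fitted = NormalDist.from_samples(values)  # sample mean and standard deviation
    return 100 * fitted.cdf(limit)


# Hypothetical responses from the most recent region of stability.
stable_region = [2.0, 2.4, 2.1, 2.6, 2.3, 1.9, 2.5, 2.2, 2.7, 2.0, 2.4, 2.3, 2.1]
print(f"Estimated percent below 2.2: {percent_below(stable_region, 2.2):.1f}%")
```

The estimate is meaningful only when the data come from a region of stability and plot close to a straight line, as the text notes.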

There is a certain amount of technical training needed to create 30,000-foot-level metrics (chapters 12 and 13 of reference 2); however, the interpretation of the chart is quite simple. In this reporting format, a box should be included below the chart that makes a statement about the process. For this chart, we can say that the process is predictable with an approximate non-conformance rate of 32.8%. That is, using the current process, the metric response will be below the goal of 2.2 about 1/3 of the time.

Regarding business-management policy, red-yellow-green versus this form of reporting can lead to very different behaviors. For this example, a red-yellow-green reporting policy would lead to fighting fires about 33% of the time because every time the metric turned red, management would ask the questions, “What just occurred? Why is our performance level now red?” while in actuality the process was performing within its predictable bounds. Red-yellow-green scorecards can result in counter-productive initiatives, 24/7 firefighting, the blame game, and proliferation of fanciful stories about why goals were not met. In addition, these scorecards convey nothing about the future.
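The firefighting effect can be illustrated with a small simulation. This is a sketch under stated assumptions: responses are drawn from an unchanging normal distribution, the standard deviation of 1.0 is an arbitrary choice, and a report is simplified to "red" whenever the response falls below the 2.2 goal (the yellow band is ignored). Only the goal and the 32.8% rate come from the article.

```python
import random
from statistics import NormalDist

GOAL = 2.2
NONCONFORMANCE = 0.328  # rate quoted in the article
SIGMA = 1.0             # assumed; the article does not give the process spread

# Choose the mean so that P(response < GOAL) equals the quoted rate.
MEAN = GOAL - SIGMA * NormalDist().inv_cdf(NONCONFORMANCE)


def simulate_red_fraction(periods, seed=0):
    """Fraction of periods reported red for a process that never changes."""
    rng = random.Random(seed)
    reds = sum(1 for _ in range(periods) if rng.gauss(MEAN, SIGMA) < GOAL)
    return reds / periods


print(f"Fraction of red reports: {simulate_red_fraction(10_000):.3f}")
```

Nothing in the simulated process ever changes, yet roughly a third of the reports come out red. Each of those would prompt the question "What just occurred?" even though the only honest answer is common-cause variability.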

With this form of performance metric reporting, we gain the understanding that the variation in this example is from common-cause process variability and that the only way to improve performance is through improving the process itself. With this system, someone would be assigned to work on improving the process that is associated with this metric. This assumes that this metric improvement need is where efforts should be made to improve business performance as a whole.

In organizations, the value-chain functions and metrics should maintain basic continuity through acquisitions and leadership change. The value chain with its 30,000-foot-level metric reporting can become the long-lasting front end of a system and baseline assessment from which strategies can be created and improvements made.

With the Integrated Enterprise Excellence approach, strategies are analytically/innovatively determined in step five of the nine-step business management system, as shown in Figure 1. The well-defined strategies created with this enhanced management system lead to targeted improvement or design projects that benefit the enterprise as a whole.

The references and links below provide more information about the IEE value chain with predictive scorecards.

Predictive Analytics Modeling Technique: Enhancements to Traditional Business Practices

The following articles provide information about enhancements to traditional strategic planning and its execution:

- Enhanced Business Management System: Descriptive Videos

- Project Selection with Whole-enterprise Benefit

References

1. Forrest W. Breyfogle III, Integrated Enterprise Excellence Volume II – Business Deployment: A Leaders’ Guide for Going Beyond Lean Six Sigma and the Balanced Scorecard, Bridgeway Books/Citius Publishing, 2008.

2. Forrest W. Breyfogle III, Integrated Enterprise Excellence Volume III – Improvement Project Execution: A Management and Black Belt Guide for Going Beyond Lean Six Sigma and the Balanced Scorecard, Bridgeway Books/Citius Publishing, 2008.
